Mirror of https://github.com/minio/minio.git, synced 2026-02-09 04:10:15 -05:00

Compare commits: key-versio ... dependabot (85 commits)

| SHA1 |
|---|
| c882488993 |
| 58659f26f4 |
| 3a0cc6c86e |
| 10b0a234d2 |
| 18f97e70b1 |
| 52eee5a2f1 |
| c6d3aac5c4 |
| fa18589d1c |
| 05e569960a |
| 9e49d5e7a6 |
| c1a49490c7 |
| 334c313da4 |
| 1b8ac0af9f |
| ba3c0fd1c7 |
| d51a4a4ff6 |
| 62383dfbfe |
| bde0d5a291 |
| 534f4a9fb1 |
| b8631cf531 |
| 456d9462e5 |
| 756f3c8142 |
| 7a80ec1cce |
| ae71d76901 |
| 07c3a429bf |
| 0cde982902 |
| d0f50cdd9b |
| da532ab93d |
| 558fc1c09c |
| 9fdbf6fe83 |
| 5c87d4ae87 |
| f0b91e5504 |
| 3b7cb6512c |
| 4ea6f3b06b |
| 86d9d9b55e |
| 5a35585acd |
| 0848e69602 |
| 02ba581ecf |
| b44b2a090c |
| c7d6a9722d |
| a8abdc797e |
| 0638ccc5f3 |
| b1a34fd63f |
| ffcfa36b13 |
| 376fbd11a7 |
| c76f209ccc |
| 7a6a2256b1 |
| d002beaee3 |
| 71f293d9ab |
| e3d183b6a4 |
| 752abc2e2c |
| b9f0e8c712 |
| 7ced9663e6 |
| 50fcf9b670 |
| 64f5c6103f |
| e909be6380 |
| 83b2ad418b |
| 7a64bb9766 |
| 34679befef |
| 4021d8c8e2 |
| de234b888c |
| 2718d9a430 |
| a65292cab1 |
| e0c79be251 |
| a6c538c5a1 |
| e1fcaebc77 |
| 21409f112d |
| 417c8648f0 |
| e2245a0b12 |
| b4b3d208dd |
| 0a36d41dcd |
| ea77bcfc98 |
| 9f24ca5d66 |
| 816666a4c6 |
| 2c7fe094d1 |
| 9ebe168782 |
| ee2028cde6 |
| ecde75f911 |
| 12a6ea89cc |
| 63e102c049 |
| 160f8a901b |
| ef9b03fbf5 |
| 1d50cae43d |
| c0a33952c6 |
| 8cad40a483 |
| 6d18dba9a2 |

`.github/ISSUE_TEMPLATE/bug_report.md` (vendored, 11 changed lines)

@@ -1,14 +1,19 @@
---
name: Bug report
about: Create a report to help us improve
about: Report a bug in MinIO (community edition is source-only)
title: ''
labels: community, triage
assignees: ''

---

## NOTE
If this case is urgent, please subscribe to [Subnet](https://min.io/pricing) so that our 24/7 support team may help you faster.
## IMPORTANT NOTES

**Community Edition**: MinIO community edition is now source-only. Install via `go install github.com/minio/minio@latest`

**Feature Requests**: We are no longer accepting feature requests for the community edition. For feature requests and enterprise support, please subscribe to [MinIO Enterprise Support](https://min.io/pricing).

**Urgent Issues**: If this case is urgent or affects production, please subscribe to [SUBNET](https://min.io/pricing) for 24/7 enterprise support.

<!--- Provide a general summary of the issue in the Title above -->

`.github/ISSUE_TEMPLATE/config.yml` (vendored, 6 changed lines)

@@ -2,7 +2,7 @@ blank_issues_enabled: false
contact_links:
- name: MinIO Community Support
url: https://slack.min.io
about: Join here for Community Support
- name: MinIO SUBNET Support
about: Community support via Slack - for questions and discussions
- name: MinIO Enterprise Support (SUBNET)
url: https://min.io/pricing
about: Join here for Enterprise Support
about: Enterprise support with SLA - for production deployments and feature requests

`.github/ISSUE_TEMPLATE/feature_request.md` (vendored, 20 changed lines)

@@ -1,20 +0,0 @@
---
name: Feature request
about: Suggest an idea for this project
title: ''
labels: community, triage
assignees: ''

---

**Is your feature request related to a problem? Please describe.**
A clear and concise description of what the problem is. Ex. I'm always frustrated when [...]

**Describe the solution you'd like**
A clear and concise description of what you want to happen.

**Describe alternatives you've considered**
A clear and concise description of any alternative solutions or features you've considered.

**Additional context**
Add any other context or screenshots about the feature request here.

`.github/workflows/go-fips.yml` (vendored, 59 changed lines)

@@ -1,59 +0,0 @@
name: FIPS Build Test

on:
pull_request:
branches:
- master

# This ensures that previous jobs for the PR are canceled when the PR is
# updated.
concurrency:
group: ${{ github.workflow }}-${{ github.head_ref }}
cancel-in-progress: true

permissions:
contents: read

jobs:
build:
name: Go BoringCrypto ${{ matrix.go-version }} on ${{ matrix.os }}
runs-on: ${{ matrix.os }}
strategy:
matrix:
go-version: [1.24.x]
os: [ubuntu-latest]
steps:
- uses: actions/checkout@v4
- uses: actions/setup-go@v5
with:
go-version: ${{ matrix.go-version }}

- name: Set up Docker Buildx
uses: docker/setup-buildx-action@v2

- name: Setup dockerfile for build test
run: |
GO_VERSION=$(go version | cut -d ' ' -f 3 | sed 's/go//')
echo Detected go version $GO_VERSION
cat > Dockerfile.fips.test <<EOF
FROM golang:${GO_VERSION}
COPY . /minio
WORKDIR /minio
ENV GOEXPERIMENT=boringcrypto
RUN make
EOF

- name: Build
uses: docker/build-push-action@v3
with:
context: .
file: Dockerfile.fips.test
push: false
load: true
tags: minio/fips-test:latest

# This should fail if grep returns non-zero exit
- name: Test binary
run: |
docker run --rm minio/fips-test:latest ./minio --version
docker run --rm -i minio/fips-test:latest /bin/bash -c 'go tool nm ./minio | grep FIPS | grep -q FIPS'
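
The **Setup dockerfile for build test** step above derives the `golang` base-image tag from `go version` output. Below is a minimal sketch of that parsing. It assumes the usual `go version goX.Y.Z os/arch` output format. `go_version_number` is a hypothetical helper, and anchoring the `sed` pattern (`s/^go//`) is a small hardening of the workflow's `s/go//`:

```sh
#!/usr/bin/env sh
# Hypothetical helper: extract the bare version number from `go version`
# output, mirroring the workflow's `cut -d ' ' -f 3 | sed ...` pipeline.
# Taking the output as an argument lets it be checked without a Go toolchain.
go_version_number() {
  # Field 3 of "go version go1.24.1 linux/amd64" is "go1.24.1";
  # dropping the leading "go" leaves "1.24.1".
  printf '%s\n' "$1" | cut -d ' ' -f 3 | sed 's/^go//'
}

go_version_number "go version go1.24.1 linux/amd64"   # prints 1.24.1

# With a toolchain installed:
#   GO_VERSION=$(go_version_number "$(go version)")
#   echo "FROM golang:${GO_VERSION}"
```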

@@ -1,8 +1,14 @@
FROM minio/minio:latest

ARG TARGETARCH
ARG RELEASE

RUN chmod -R 777 /usr/bin

COPY ./minio /usr/bin/minio
COPY ./minio-${TARGETARCH}.${RELEASE} /usr/bin/minio
COPY ./minio-${TARGETARCH}.${RELEASE}.minisig /usr/bin/minio.minisig
COPY ./minio-${TARGETARCH}.${RELEASE}.sha256sum /usr/bin/minio.sha256sum

COPY dockerscripts/docker-entrypoint.sh /usr/bin/docker-entrypoint.sh

ENTRYPOINT ["/usr/bin/docker-entrypoint.sh"]
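
The Dockerfile above copies a `.sha256sum` file next to the binary. One way to check a binary against such a file is sketched below. `verify_sha256` is a hypothetical helper. It assumes the checksum file's first line begins with the hex digest followed by a space, which is the format `sha256sum` writes. `sha256sum` is the GNU coreutils tool; on macOS, `shasum -a 256` is the usual substitute.

```sh
#!/usr/bin/env sh
# Hypothetical helper: check a binary against a companion ".sha256sum" file.
# Assumes the text before the first space on the file's first line is the hex
# SHA-256 digest (sha256sum's output format); the filename recorded after it
# is ignored, so it may differ from the local name.
verify_sha256() {
  binary=$1
  sumfile=$2
  expected=$(head -n 1 "$sumfile" | cut -d ' ' -f 1)
  actual=$(sha256sum "$binary" | cut -d ' ' -f 1)
  if [ -n "$expected" ] && [ "$expected" = "$actual" ]; then
    echo "OK: $binary"
  else
    echo "checksum mismatch: $binary" >&2
    return 1
  fi
}

# Inside an image built from the Dockerfile above, for example:
#   verify_sha256 /usr/bin/minio /usr/bin/minio.sha256sum
```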

`PULL_REQUESTS_ETIQUETTE.md` (normal file, 93 changed lines)

@@ -0,0 +1,93 @@
# MinIO Pull Request Guidelines

These guidelines ensure high-quality commits in MinIO’s GitHub repositories, maintaining
a clear, valuable commit history for our open-source projects. They apply to all contributors,
fostering efficient reviews and robust code.

## Why Pull Requests?

Pull Requests (PRs) drive quality in MinIO’s codebase by:
- Enabling peer review without pair programming.
- Documenting changes for future reference.
- Ensuring commits tell a clear story of development.

**A poor commit lasts forever, even if code is refactored.**

## Crafting a Quality PR

A strong MinIO PR:
- Delivers a complete, valuable change (feature, bug fix, or improvement).
- Has a concise title (e.g., `[S3] Fix bucket policy parsing #1234`) and a summary with context, referencing issues (e.g., `#1234`).
- Contains well-written, logical commits explaining *why* changes were made (e.g., “Add S3 bucket tagging support so that users can organize resources efficiently”).
- Is small, focused, and easy to review—ideally one commit, unless multiple commits better narrate complex work.
- Adheres to MinIO’s coding standards (e.g., Go style, error handling, testing).

PRs must flow smoothly through review to reach production. Large PRs should be split into smaller, manageable ones.

## Submitting PRs

1. **Title and Summary**:
   - Use a scannable title: `[Subsystem] Action Description #Issue` (e.g., `[IAM] Add role-based access control #567`).
   - Include context in the summary: what changed, why, and any issue references.
   - Mark in-progress PRs with `[WIP]` or open them as GitHub draft PRs to avoid premature merging.

2. **Commits**:
   - Write clear messages: what changed and why (e.g., “Refactor S3 API handler to reduce latency so that requests process 20% faster”).
   - Rebase to tidy commits before submitting (e.g., `git rebase -i master` to squash typos or reword messages), unless multiple contributors worked on the branch.
   - Keep PRs focused—one feature or fix. Split large changes into multiple PRs.

3. **Testing**:
   - Include unit tests for new functionality or bug fixes.
   - Ensure existing tests pass (`make test`).
   - Document testing steps in the PR summary if manual testing was performed.

4. **Before Submitting**:
   - Run `make verify` to check formatting, linting, and tests.
   - Reference related issues (e.g., “Closes #1234”).
   - Notify team members via GitHub `@mentions` if urgent or complex.
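
The title convention `[Subsystem] Action Description #Issue` can be checked mechanically before opening a PR. Below is a minimal POSIX sh sketch. `check_pr_title` is hypothetical, and the pattern is an assumption: a bracketed subsystem tag made of letters, digits, `-`, or `_`, a description, and a trailing `#<number>`.

```sh
#!/usr/bin/env sh
# Hypothetical pre-submit check for the "[Subsystem] Action Description #Issue"
# title convention. The pattern is an assumption: a bracketed tag of letters,
# digits, "-" or "_", a non-empty description, and a trailing "#<number>".
check_pr_title() {
  if printf '%s\n' "$1" | grep -Eq '^\[[A-Za-z0-9_-]+] [^ ].* #[0-9]+$'; then
    echo "ok: $1"
  else
    echo "title does not match '[Subsystem] Action Description #Issue': $1" >&2
    return 1
  fi
}

check_pr_title "[IAM] Add role-based access control #567"
```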

## Reviewing PRs

Reviewers ensure MinIO’s commit history remains a clear, reliable record. Responsibilities include:

1. **Commit Quality**:
   - Verify each commit explains *why* the change was made (e.g., “So that…”).
   - Request rebasing if commits are unclear, redundant, or lack context (e.g., “Please squash typo fixes into the parent commit”).

2. **Code Quality**:
   - Check adherence to MinIO’s Go standards (e.g., error handling, documentation).
   - Ensure tests cover new code and pass CI.
   - Flag bugs or critical issues for immediate fixes; suggest non-blocking improvements as follow-up issues.

3. **Flow**:
   - Review promptly to avoid blocking progress.
   - Balance quality and speed—minor issues can be addressed later via issues, not PR blocks.
   - If unable to complete the review, tag another reviewer (e.g., `@username please take over`).

4. **Shared Responsibility**:
   - All MinIO contributors are reviewers. The first commenter on a PR owns the review unless they delegate.
   - Multiple reviewers are encouraged for complex PRs.

5. **No Self-Edits**:
   - Don’t modify the PR directly (e.g., fixing bugs). Request changes from the submitter or create a follow-up PR.
   - If you edit, you’re a collaborator, not a reviewer, and cannot merge.

6. **Testing**:
   - Assume the submitter tested the code. If testing is unclear, ask for details (e.g., “How was this tested?”).
   - Reject untested PRs unless testing is infeasible; in that case, help the submitter set up tests.

## Tips for Success

- **Small PRs**: Easier to review, faster to merge. Split large changes logically.
- **Clear Commits**: Use `git rebase -i` to refine history before submitting.
- **Engage Early**: Discuss complex changes in issues or Slack (https://slack.min.io) before coding.
- **Be Responsive**: Address reviewer feedback promptly to keep PRs moving.
- **Learn from Reviews**: Use feedback to improve future contributions.
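
The **Clear Commits** tip can be practiced without hand-editing the rebase todo list. Record a typo fix with `git commit --fixup`, then let `git rebase -i --autosquash` fold it into its parent. The sketch below runs in a throwaway repository, and the branch names and commit messages are illustrative only:

```sh
#!/usr/bin/env sh
# Sketch: squash a typo fix into its parent commit with --fixup/--autosquash.
# Everything happens in a temporary repository; names are illustrative.
set -e
repo=$(mktemp -d)
cd "$repo"
git init -q
git symbolic-ref HEAD refs/heads/master
git config user.name "Example Dev"
git config user.email "dev@example.com"
git config commit.gpgsign false

echo "base" > README
git add README
git commit -q -m "Initial commit"

git checkout -q -b feature
echo "helo" > feature.txt
git add feature.txt
git commit -q -m "Add greeting so that users see a welcome message"

# A later typo fix, recorded as a fixup of the commit above.
echo "hello" > feature.txt
git commit -q -a --fixup=HEAD

# GIT_SEQUENCE_EDITOR=: accepts the generated todo list unchanged,
# so the interactive rebase runs without opening an editor.
GIT_SEQUENCE_EDITOR=: git rebase -q -i --autosquash master

git log --oneline master..feature
```

After the rebase, the branch carries a single commit with the original message and the corrected file content. This is the history the guidelines ask reviewers to see.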

## Resources

- [MinIO Coding Standards](https://github.com/minio/minio/blob/master/CONTRIBUTING.md)
- [Effective Commit Messages](https://mislav.net/2014/02/hidden-documentation/)
- [GitHub PR Tips](https://github.com/blog/1943-how-to-write-the-perfect-pull-request)

By following these guidelines, we ensure MinIO’s codebase remains high-quality, maintainable, and a joy to contribute to. Happy coding!

@@ -1,7 +0,0 @@
# MinIO FIPS Builds

MinIO creates FIPS builds using a patched version of the Go compiler (that uses BoringCrypto, from BoringSSL, which is [FIPS 140-2 validated](https://csrc.nist.gov/csrc/media/projects/cryptographic-module-validation-program/documents/security-policies/140sp2964.pdf)) published by the Go team [here](https://github.com/golang/go/tree/dev.boringcrypto/misc/boring).

MinIO FIPS executables are available at <http://dl.min.io>; they are only published for the `linux-amd64` architecture as binary files with the suffix `.fips`. We also publish corresponding container images to our official image repositories.

We are not making any statements or representations about the suitability of this code or build in relation to the FIPS 140-2 standard. Interested users will have to evaluate for themselves whether this is useful for their own purposes.

`README.md` (267 changed lines)

@@ -4,253 +4,154 @@

[](https://min.io)

MinIO is a High Performance Object Storage released under GNU Affero General Public License v3.0. It is API compatible with Amazon S3 cloud storage service. Use MinIO to build high performance infrastructure for machine learning, analytics and application data workloads. To learn more about what MinIO is doing for AI storage, go to [AI storage documentation](https://min.io/solutions/object-storage-for-ai).
MinIO is a high-performance, S3-compatible object storage solution released under the GNU AGPL v3.0 license.
Designed for speed and scalability, it powers AI/ML, analytics, and data-intensive workloads with industry-leading performance.

This README provides quickstart instructions on running MinIO on bare metal hardware, including container-based installations. For Kubernetes environments, use the [MinIO Kubernetes Operator](https://github.com/minio/operator/blob/master/README.md).
- S3 API Compatible – Seamless integration with existing S3 tools
- Built for AI & Analytics – Optimized for large-scale data pipelines
- High Performance – Ideal for demanding storage workloads

## Container Installation
This README provides instructions for building MinIO from source and deploying onto bare-metal hardware.
Use the [MinIO Documentation](https://github.com/minio/docs) project to build and host a local copy of the documentation.

Use the following commands to run a standalone MinIO server as a container.
## MinIO is Open Source Software

Standalone MinIO servers are best suited for early development and evaluation. Certain features such as versioning, object locking, and bucket replication
require a distributed MinIO deployment with Erasure Coding. For extended development and production, deploy MinIO with Erasure Coding enabled - specifically,
with a *minimum* of 4 drives per MinIO server. See [MinIO Erasure Code Overview](https://min.io/docs/minio/linux/operations/concepts/erasure-coding.html)
for more complete documentation.
We designed MinIO as Open Source software for the Open Source software community. We encourage the community to remix, redesign, and reshare MinIO under the terms of the AGPLv3 license.

### Stable
All usage of MinIO in your application stack requires validation against AGPLv3 obligations, which include but are not limited to the release of modified code to the community from which you have benefited. Any commercial/proprietary usage of the AGPLv3 software, including repackaging or reselling services/features, is done at your own risk.

Use the following command to run the latest stable image of MinIO as a container using an ephemeral data volume:
The AGPLv3 provides no obligation by any party to support, maintain, or warranty the original or any modified work.
All support is provided on a best-effort basis through GitHub and our [Slack](https://slack.min.io) channel, and any member of the community is welcome to contribute and assist others in their usage of the software.

```sh
podman run -p 9000:9000 -p 9001:9001 \
  quay.io/minio/minio server /data --console-address ":9001"
```
MinIO [AIStor](https://www.min.io/product/aistor) includes enterprise-grade support and licensing for workloads which require commercial or proprietary usage and production-level SLA/SLO-backed support. For more information, [reach out for a quote](https://min.io/pricing).

The MinIO deployment starts using default root credentials `minioadmin:minioadmin`. You can test the deployment using the MinIO Console, an embedded
object browser built into MinIO Server. Point a web browser running on the host machine to <http://127.0.0.1:9000> and log in with the
root credentials. You can use the Browser to create buckets, upload objects, and browse the contents of the MinIO server.
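
When the container is started from a script, it can help to wait for the server before opening the Console or running `mc`. The sketch below assumes `curl` is installed and that the server answers MinIO's liveness endpoint `/minio/health/live` on port 9000. `wait_for_minio` is a hypothetical helper:

```sh
#!/usr/bin/env sh
# Hypothetical helper: poll MinIO's liveness endpoint until it answers
# or the attempt budget runs out. Requires curl.
wait_for_minio() {
  url=${1:-http://127.0.0.1:9000}
  attempts=${2:-30}
  i=0
  while [ "$i" -lt "$attempts" ]; do
    # -f turns HTTP errors into a non-zero exit; --max-time bounds each probe.
    if curl -fsS -o /dev/null --max-time 2 "$url/minio/health/live"; then
      echo "MinIO is live at $url"
      return 0
    fi
    i=$((i + 1))
    sleep 1
  done
  echo "MinIO not live at $url after $attempts attempts" >&2
  return 1
}

# Usage, after starting the container with podman:
#   wait_for_minio http://127.0.0.1:9000 60
```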

## Source-Only Distribution

You can also connect using any S3-compatible tool, such as the MinIO Client `mc` commandline tool. See
[Test using MinIO Client `mc`](#test-using-minio-client-mc) for more information on using the `mc` commandline tool. For application developers,
see <https://min.io/docs/minio/linux/developers/minio-drivers.html> to view MinIO SDKs for supported languages.
**Important:** The MinIO community edition is now distributed as source code only. We will no longer provide pre-compiled binary releases for the community version.

> NOTE: To deploy MinIO with persistent storage, you must map local persistent directories from the host OS to the container using the `podman -v` option. For example, `-v /mnt/data:/data` maps the host OS drive at `/mnt/data` to `/data` on the container.
### Installing Latest MinIO Community Edition

## macOS
To use MinIO community edition, you have two options:

Use the following commands to run a standalone MinIO server on macOS.
1. **Install from source** using `go install github.com/minio/minio@latest` (recommended)
2. **Build a Docker image** from the provided Dockerfile

Standalone MinIO servers are best suited for early development and evaluation. Certain features such as versioning, object locking, and bucket replication require a distributed MinIO deployment with Erasure Coding. For extended development and production, deploy MinIO with Erasure Coding enabled - specifically, with a *minimum* of 4 drives per MinIO server. See [MinIO Erasure Code Overview](https://min.io/docs/minio/linux/operations/concepts/erasure-coding.html) for more complete documentation.
See the sections below for detailed instructions on each method.

### Homebrew (recommended)
### Legacy Binary Releases

Run the following command to install the latest stable MinIO package using [Homebrew](https://brew.sh/). Replace ``/data`` with the path to the drive or directory in which you want MinIO to store data.
Historical pre-compiled binary releases remain available for reference but are no longer maintained:
- GitHub Releases: https://github.com/minio/minio/releases
- Direct downloads: https://dl.min.io/server/minio/release/

```sh
brew install minio/stable/minio
minio server /data
```

> NOTE: If you previously installed MinIO using `brew install minio`, we recommend reinstalling it from the official `minio/stable/minio` repository instead.

```sh
brew uninstall minio
brew install minio/stable/minio
```

The MinIO deployment starts using default root credentials `minioadmin:minioadmin`. You can test the deployment using the MinIO Console, an embedded web-based object browser built into MinIO Server. Point a web browser running on the host machine to <http://127.0.0.1:9000> and log in with the root credentials. You can use the Browser to create buckets, upload objects, and browse the contents of the MinIO server.
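
The `minioadmin:minioadmin` defaults are only suitable for local testing. MinIO reads the root credentials from the `MINIO_ROOT_USER` and `MINIO_ROOT_PASSWORD` environment variables. The sketch below sets both before the server starts. The user name `admin` and the `random_secret` helper are illustrative choices, not MinIO defaults:

```sh
#!/usr/bin/env sh
# Sketch: replace the default root credentials before starting MinIO.
# random_secret is a hypothetical helper: 16 random bytes rendered as
# 32 lowercase hex characters.
random_secret() {
  head -c 16 /dev/urandom | od -An -tx1 | tr -d ' \n'
}

export MINIO_ROOT_USER=admin
export MINIO_ROOT_PASSWORD="$(random_secret)"

# Then start the server as usual, e.g.:
#   minio server /data
```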

You can also connect using any S3-compatible tool, such as the MinIO Client `mc` commandline tool. See [Test using MinIO Client `mc`](#test-using-minio-client-mc) for more information on using the `mc` commandline tool. For application developers, see <https://min.io/docs/minio/linux/developers/minio-drivers.html> to view MinIO SDKs for supported languages.

### Binary Download

Use the following command to download and run a standalone MinIO server on macOS. Replace ``/data`` with the path to the drive or directory in which you want MinIO to store data.

```sh
wget https://dl.min.io/server/minio/release/darwin-amd64/minio
chmod +x minio
./minio server /data
```

The MinIO deployment starts using default root credentials `minioadmin:minioadmin`. You can test the deployment using the MinIO Console, an embedded web-based object browser built into MinIO Server. Point a web browser running on the host machine to <http://127.0.0.1:9000> and log in with the root credentials. You can use the Browser to create buckets, upload objects, and browse the contents of the MinIO server.

You can also connect using any S3-compatible tool, such as the MinIO Client `mc` commandline tool. See [Test using MinIO Client `mc`](#test-using-minio-client-mc) for more information on using the `mc` commandline tool. For application developers, see <https://min.io/docs/minio/linux/developers/minio-drivers.html> to view MinIO SDKs for supported languages.

## GNU/Linux

Use the following command to run a standalone MinIO server on Linux hosts running 64-bit Intel/AMD architectures. Replace ``/data`` with the path to the drive or directory in which you want MinIO to store data.

```sh
wget https://dl.min.io/server/minio/release/linux-amd64/minio
chmod +x minio
./minio server /data
```

The following table lists supported architectures. Replace the `wget` URL with the URL for your Linux host's architecture.

| Architecture | URL |
| -------- | ------ |
| 64-bit Intel/AMD | <https://dl.min.io/server/minio/release/linux-amd64/minio> |
| 64-bit ARM | <https://dl.min.io/server/minio/release/linux-arm64/minio> |
| 64-bit PowerPC LE (ppc64le) | <https://dl.min.io/server/minio/release/linux-ppc64le/minio> |
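
For hosts that still use these legacy downloads, the architecture segment of the URL can be derived from `uname -m`. The sketch below covers only the three architectures in the table. `minio_linux_arch` is a hypothetical helper:

```sh
#!/usr/bin/env sh
# Hypothetical helper: map `uname -m` output to the architecture segment
# used in the download URLs in the table (only the three listed there).
minio_linux_arch() {
  case "$1" in
    x86_64|amd64)  echo amd64 ;;
    aarch64|arm64) echo arm64 ;;
    ppc64le)       echo ppc64le ;;
    *) echo "unsupported architecture: $1" >&2; return 1 ;;
  esac
}

# Print the download URL for the current host (nothing on unlisted architectures).
if arch=$(minio_linux_arch "$(uname -m)"); then
  echo "https://dl.min.io/server/minio/release/linux-${arch}/minio"
fi
```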

The MinIO deployment starts using default root credentials `minioadmin:minioadmin`. You can test the deployment using the MinIO Console, an embedded web-based object browser built into MinIO Server. Point a web browser running on the host machine to <http://127.0.0.1:9000> and log in with the root credentials. You can use the Browser to create buckets, upload objects, and browse the contents of the MinIO server.

You can also connect using any S3-compatible tool, such as the MinIO Client `mc` commandline tool. See [Test using MinIO Client `mc`](#test-using-minio-client-mc) for more information on using the `mc` commandline tool. For application developers, see <https://min.io/docs/minio/linux/developers/minio-drivers.html> to view MinIO SDKs for supported languages.

> NOTE: Standalone MinIO servers are best suited for early development and evaluation. Certain features such as versioning, object locking, and bucket replication require a distributed MinIO deployment with Erasure Coding. For extended development and production, deploy MinIO with Erasure Coding enabled - specifically, with a *minimum* of 4 drives per MinIO server. See [MinIO Erasure Code Overview](https://min.io/docs/minio/linux/operations/concepts/erasure-coding.html) for more complete documentation.

## Microsoft Windows

To run MinIO on 64-bit Windows hosts, download the MinIO executable from the following URL:

```sh
https://dl.min.io/server/minio/release/windows-amd64/minio.exe
```

Use the following command to run a standalone MinIO server on the Windows host. Replace ``D:\`` with the path to the drive or directory in which you want MinIO to store data. You must change the terminal or PowerShell directory to the location of the ``minio.exe`` executable, *or* add the path to that directory to the system ``PATH``:

```sh
minio.exe server D:\
```

The MinIO deployment starts using default root credentials `minioadmin:minioadmin`. You can test the deployment using the MinIO Console, an embedded web-based object browser built into MinIO Server. Point a web browser running on the host machine to <http://127.0.0.1:9000> and log in with the root credentials. You can use the Browser to create buckets, upload objects, and browse the contents of the MinIO server.

You can also connect using any S3-compatible tool, such as the MinIO Client `mc` commandline tool. See [Test using MinIO Client `mc`](#test-using-minio-client-mc) for more information on using the `mc` commandline tool. For application developers, see <https://min.io/docs/minio/linux/developers/minio-drivers.html> to view MinIO SDKs for supported languages.

> NOTE: Standalone MinIO servers are best suited for early development and evaluation. Certain features such as versioning, object locking, and bucket replication require a distributed MinIO deployment with Erasure Coding. For extended development and production, deploy MinIO with Erasure Coding enabled - specifically, with a *minimum* of 4 drives per MinIO server. See [MinIO Erasure Code Overview](https://min.io/docs/minio/linux/operations/concepts/erasure-coding.html) for more complete documentation.

**These legacy binaries will not receive updates.** We strongly recommend using source builds for access to the latest features, bug fixes, and security updates.

## Install from Source

Use the following commands to compile and run a standalone MinIO server from source. Source installation is only intended for developers and advanced users. If you do not have a working Golang environment, please follow [How to install Golang](https://golang.org/doc/install). Minimum version required is [go1.24](https://golang.org/dl/#stable).
Use the following commands to compile and run a standalone MinIO server from source.
If you do not have a working Golang environment, please follow [How to install Golang](https://golang.org/doc/install). Minimum version required is [go1.24](https://golang.org/dl/#stable).

```sh
go install github.com/minio/minio@latest
```
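
`go install` writes the `minio` binary to Go's install directory, which may not be on your `PATH`. The sketch below locates it, assuming Go's documented defaults: `$GOBIN` if set, otherwise `$GOPATH/bin`, with `GOPATH` defaulting to `$HOME/go`. `go_bin_dir` is a hypothetical helper. `go env GOBIN GOPATH` remains the authoritative source, because it also reflects settings saved with `go env -w`.

```sh
#!/usr/bin/env sh
# Hypothetical helper: print the directory `go install` writes binaries to,
# following Go's defaults: $GOBIN if set, else $GOPATH/bin, where GOPATH
# defaults to $HOME/go. Assumption: for a colon-separated GOPATH, the first
# entry is used. Values saved with `go env -w` are not visible here.
go_bin_dir() {
  if [ -n "${GOBIN:-}" ]; then
    printf '%s\n' "$GOBIN"
  else
    gopath=${GOPATH:-$HOME/go}
    printf '%s\n' "${gopath%%:*}/bin"
  fi
}

# Make the freshly installed `minio` resolvable in this shell session.
PATH="$(go_bin_dir):$PATH"
export PATH
```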

The MinIO deployment starts using default root credentials `minioadmin:minioadmin`. You can test the deployment using the MinIO Console, an embedded web-based object browser built into MinIO Server. Point a web browser running on the host machine to <http://127.0.0.1:9000> and log in with the root credentials. You can use the Browser to create buckets, upload objects, and browse the contents of the MinIO server.
You can alternatively run `go build` and use the `GOOS` and `GOARCH` environment variables to control the OS and architecture target.
For example:

You can also connect using any S3-compatible tool, such as the MinIO Client `mc` commandline tool. See [Test using MinIO Client `mc`](#test-using-minio-client-mc) for more information on using the `mc` commandline tool. For application developers, see <https://min.io/docs/minio/linux/developers/minio-drivers.html> to view MinIO SDKs for supported languages.

> NOTE: Standalone MinIO servers are best suited for early development and evaluation. Certain features such as versioning, object locking, and bucket replication require a distributed MinIO deployment with Erasure Coding. For extended development and production, deploy MinIO with Erasure Coding enabled - specifically, with a *minimum* of 4 drives per MinIO server. See [MinIO Erasure Code Overview](https://min.io/docs/minio/linux/operations/concepts/erasure-coding.html) for more complete documentation.
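
To try an erasure-coded layout locally, MinIO accepts an expansion notation with three dots, such as `/mnt/data{1...4}`. MinIO (not the shell) expands this into four drive paths. The sketch below creates the directories and prints that argument. `prepare_drives` is hypothetical, and the 4-drive floor mirrors the note above. In production, each path should be a separate physical drive rather than a directory on one disk.

```sh
#!/usr/bin/env sh
# Hypothetical helper: create <base>1..<base>N and print the MinIO
# expansion pattern "<base>{1...N}" for `minio server`.
# The minimum of 4 mirrors the README's erasure-coding guidance.
prepare_drives() {
  base=$1
  count=${2:-4}
  if [ "$count" -lt 4 ]; then
    echo "erasure coding guidance calls for at least 4 drives, got $count" >&2
    return 1
  fi
  i=1
  while [ "$i" -le "$count" ]; do
    mkdir -p "$base$i"
    i=$((i + 1))
  done
  echo "$base{1...$count}"
}

# Usage (keep the pattern quoted; MinIO expands it, not the shell):
#   drives=$(prepare_drives /mnt/data 4)
#   minio server "$drives"
```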

|

||||

|

||||

MinIO strongly recommends *against* using compiled-from-source MinIO servers for production environments.

|

||||

|

||||

## Deployment Recommendations

|

||||

|

||||

### Allow port access for Firewalls

|

||||

|

||||

By default MinIO uses the port 9000 to listen for incoming connections. If your platform blocks the port by default, you may need to enable access to the port.

|

||||

|

||||

### ufw

|

||||

|

||||

For hosts with ufw enabled (Debian based distros), you can use `ufw` command to allow traffic to specific ports. Use below command to allow access to port 9000

|

||||

|

||||

```sh

|

||||

ufw allow 9000

|

||||

```

|

||||

env GOOS=linux GOARCh=arm64 go build

|

||||

```

|

||||

|

||||

Below command enables all incoming traffic to ports ranging from 9000 to 9010.

|

||||

Start MinIO by running `minio server PATH` where `PATH` is any empty folder on your local filesystem.

|

||||

|

||||

The MinIO deployment starts using default root credentials `minioadmin:minioadmin`.

|

||||

You can test the deployment using the MinIO Console, an embedded web-based object browser built into MinIO Server.

|

||||

Point a web browser running on the host machine to <http://127.0.0.1:9000> and log in with the root credentials.

|

||||

You can use the Browser to create buckets, upload objects, and browse the contents of the MinIO server.

|

||||

|

||||

You can also connect using any S3-compatible tool, such as the MinIO Client `mc` commandline tool:

|

||||

|

||||

```sh

|

||||

ufw allow 9000:9010/tcp

|

||||

mc alias set local http://localhost:9000 minioadmin minioadmin

|

||||

mc admin info local

|

||||

```

### firewall-cmd

See [Test using MinIO Client `mc`](#test-using-minio-client-mc) for more information on using the `mc` command-line tool.

For application developers, see <https://docs.min.io/enterprise/aistor-object-store/developers/sdk/> to view MinIO SDKs for supported languages.

For hosts with firewall-cmd enabled (CentOS), you can use the `firewall-cmd` command to allow traffic to specific ports. Use the commands below to allow access to port 9000:

> [!NOTE]
> Production environments using compiled-from-source MinIO binaries do so at their own risk.
> The AGPLv3 license provides no warranties or liabilities for any such usage.

## Build Docker Image

You can use the `docker build .` command to build a Docker image on your local host machine.

You must first [build MinIO](#install-from-source) and ensure the `minio` binary exists in the project root.

The following command builds the Docker image using the default `Dockerfile` in the root project directory, with the repository and image tag `myminio:minio`:

```sh
firewall-cmd --get-active-zones
```

```sh
docker build -t myminio:minio .
```

This command gets the active zone(s). Now apply port rules to the relevant zones returned above. For example, if the zone is `public`, use:

Use `docker image ls` to confirm the image exists in your local repository.

You can run the server using a standard Docker invocation:

```sh
firewall-cmd --zone=public --add-port=9000/tcp --permanent
```

```sh
docker run -p 9000:9000 -p 9001:9001 myminio:minio server /tmp/minio --console-address :9001
```

Note that `--permanent` makes the rules persist across firewall starts, restarts, and reloads. Finally, reload the firewall for the changes to take effect:

Complete documentation for building Docker containers, managing custom images, or loading images into orchestration platforms is out of scope for this documentation.

You can modify the `Dockerfile` and `dockerscripts/docker-entrypoint.sh` as needed to reflect your specific image requirements.

```sh
firewall-cmd --reload
```

See the [MinIO Container](https://docs.min.io/community/minio-object-store/operations/deployments/baremetal-deploy-minio-as-a-container.html#deploy-minio-container) documentation for more guidance on running MinIO within a container image.

### iptables

## Install using Helm Charts

For hosts with iptables enabled (RHEL, CentOS, etc.), you can use the `iptables` command to enable all traffic coming to specific ports. Use the commands below to allow access to port 9000:

There are two paths for installing MinIO onto Kubernetes infrastructure:

```sh
iptables -A INPUT -p tcp --dport 9000 -j ACCEPT
service iptables restart
```

- Use the [MinIO Operator](https://github.com/minio/operator)
- Use the community-maintained [Helm charts](https://github.com/minio/minio/tree/master/helm/minio)

The commands below enable all incoming traffic to ports 9000 through 9010:

```sh
iptables -A INPUT -p tcp --dport 9000:9010 -j ACCEPT
service iptables restart
```

See the [MinIO Documentation](https://docs.min.io/community/minio-object-store/operations/deployments/kubernetes.html) for guidance on deploying using the Operator.

The Community Helm chart has instructions in the folder-level README.

## Test MinIO Connectivity

### Test using MinIO Console

MinIO Server comes with an embedded web-based object browser. Point your web browser to <http://127.0.0.1:9000> to ensure your server has started successfully.

MinIO Server comes with an embedded web-based object browser.

Point your web browser to <http://127.0.0.1:9000> to ensure your server has started successfully.

> NOTE: MinIO runs the Console on a random port by default. To choose a specific port, use `--console-address` to pick a specific interface and port.

> [!NOTE]
> MinIO runs the Console on a random port by default. To choose a specific port, use `--console-address` to pick a specific interface and port.

### Things to consider

### Test using MinIO Client `mc`

MinIO redirects browser access requests to the configured server port (e.g. `127.0.0.1:9000`) to the configured Console port. MinIO uses the hostname or IP address specified in the request when building the redirect URL. The URL and port *must* be accessible by the client for the redirection to work.

`mc` provides a modern alternative to UNIX commands like ls, cat, cp, mirror, diff, etc. It supports filesystems and Amazon S3-compatible cloud storage services.

For deployments behind a load balancer, proxy, or ingress rule where the MinIO host IP address or port is not public, use the `MINIO_BROWSER_REDIRECT_URL` environment variable to specify the external hostname for the redirect. The LB/Proxy must have rules for directing traffic to the Console port specifically.

For example, consider a MinIO deployment behind a proxy exposing `https://minio.example.net` and `https://console.minio.example.net`, with rules forwarding traffic on ports :9000 and :9001 to MinIO and the MinIO Console, respectively, on the internal network. Set `MINIO_BROWSER_REDIRECT_URL` to `https://console.minio.example.net` to ensure the browser receives a valid, reachable URL.

|

||||

|

||||

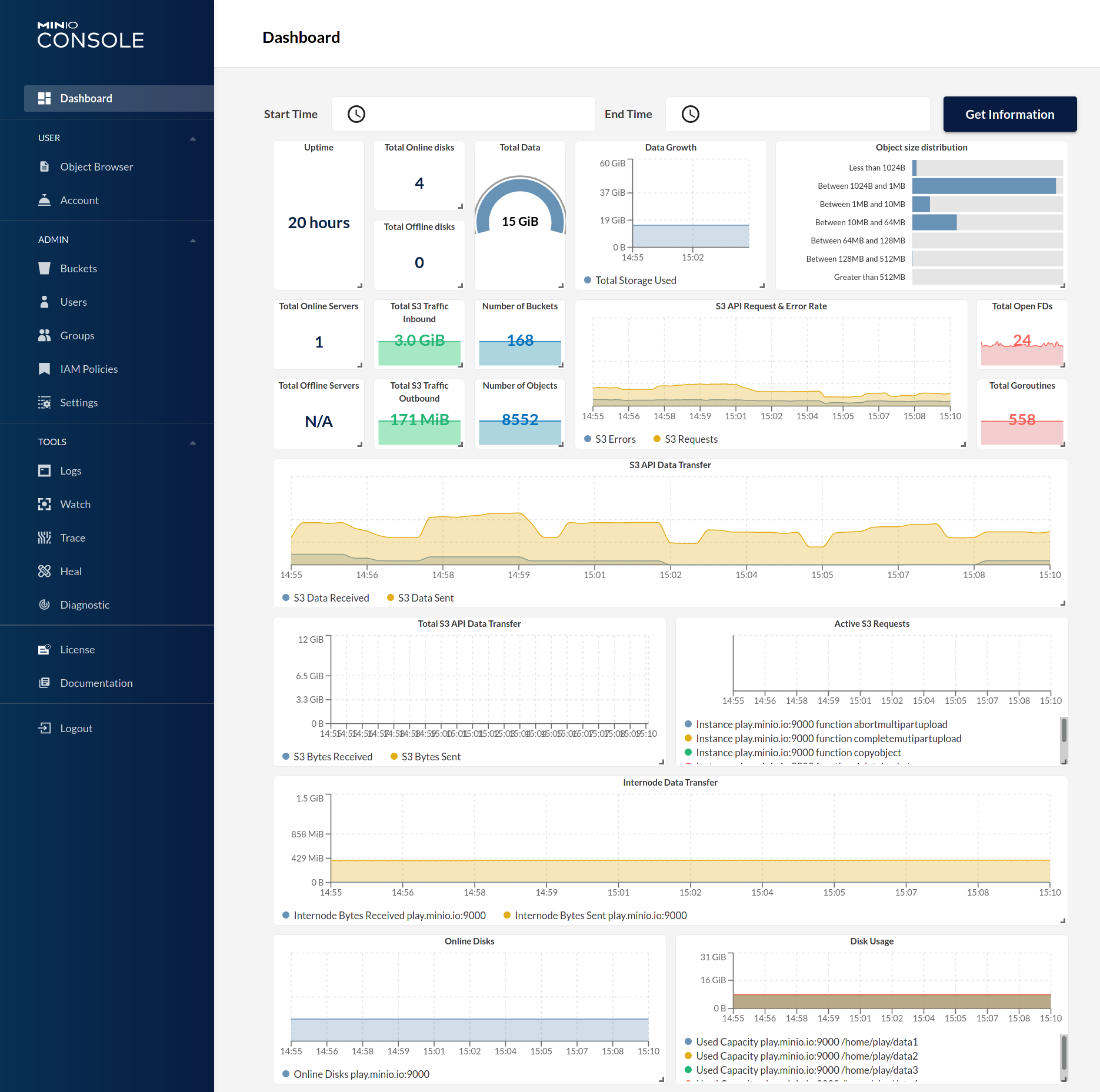

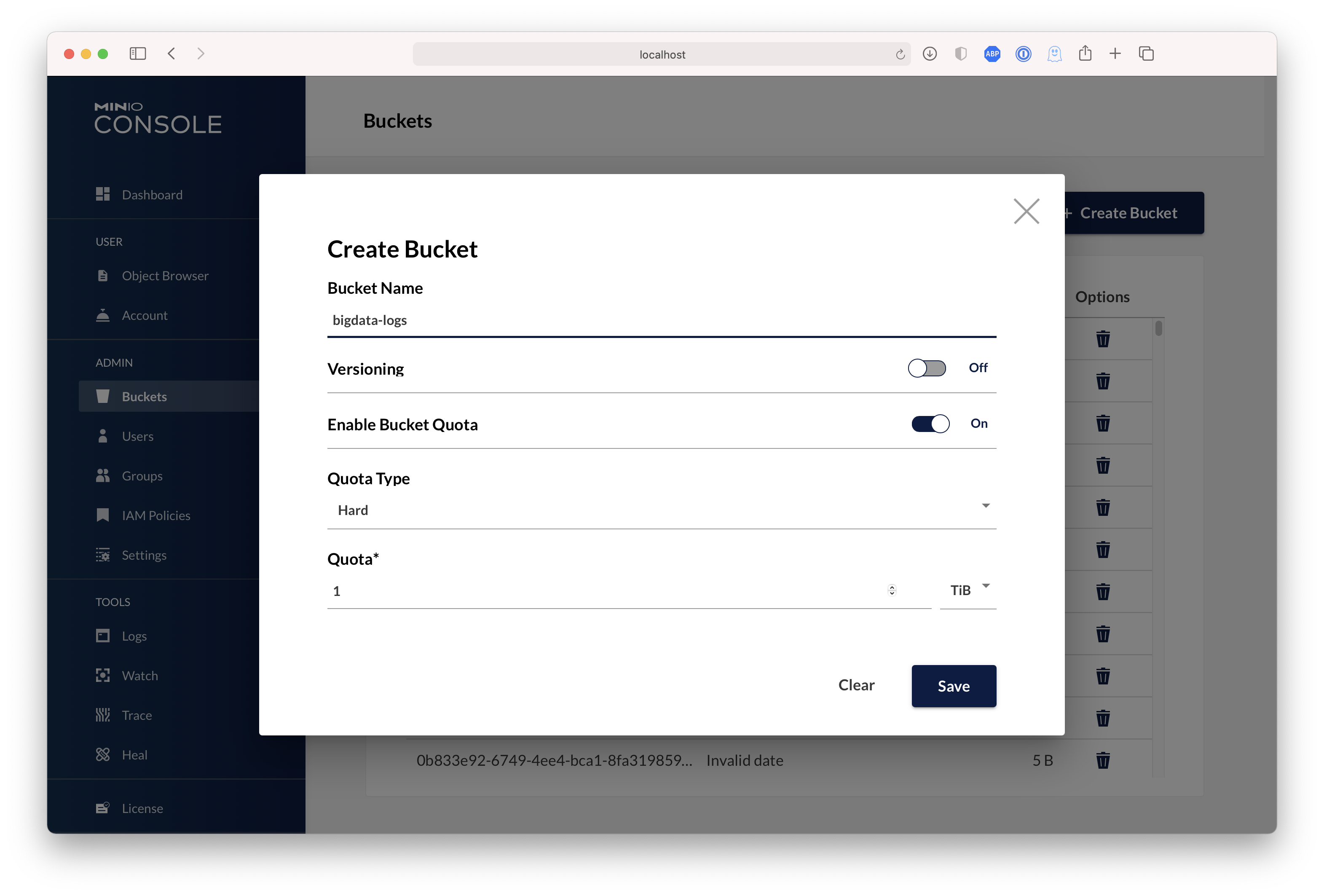

| Dashboard | Creating a bucket |

|

||||

| ------------- | ------------- |

|

||||

|  |  |

## Test using MinIO Client `mc`

`mc` provides a modern alternative to UNIX commands like ls, cat, cp, mirror, diff, etc. It supports filesystems and Amazon S3-compatible cloud storage services. Follow the MinIO Client [Quickstart Guide](https://min.io/docs/minio/linux/reference/minio-mc.html#quickstart) for further instructions.

## Upgrading MinIO

Upgrades require zero downtime in MinIO: all upgrades are non-disruptive and all transactions on MinIO are atomic. Upgrading all servers simultaneously is therefore the recommended way to upgrade MinIO.

> NOTE: Updating directly from <https://dl.min.io> requires internet access. Optionally, you can host mirrors at <https://my-artifactory.example.com/minio/>.

- For deployments that installed the MinIO server binary by hand, use [`mc admin update`](https://min.io/docs/minio/linux/reference/minio-mc-admin/mc-admin-update.html)

The following commands set a local alias, validate the server information, create a bucket, copy data to that bucket, and list the contents of the bucket.

```sh
mc admin update <minio alias, e.g., myminio>
```

```sh
mc alias set local http://localhost:9000 minioadmin minioadmin
mc admin info local
mc mb local/data
mc cp ~/Downloads/mydata local/data/
mc ls local/data/
```

- For deployments without external internet access (e.g. air-gapped environments), download the binary from <https://dl.min.io> and use it to replace the existing MinIO binary (for example, `/opt/bin/minio`). Apply executable permissions with `chmod +x /opt/bin/minio`, then run `mc admin service restart alias/`.

- For installations using the Systemd MinIO service, upgrade via RPM/DEB packages **in parallel** on all servers, or replace the binary (for example, `/opt/bin/minio`) on all nodes. Apply executable permissions with `chmod +x /opt/bin/minio`, then run `mc admin service restart alias/`.

### Upgrade Checklist

- Test all upgrades in a lower environment (DEV, QA, UAT) before applying them to production. Performing blind upgrades in production environments carries significant risk.
- Read the release notes for MinIO *before* performing any upgrade. There is no requirement to upgrade every time a new release is published. Some releases may not be relevant to your setup, so avoid upgrading production environments unnecessarily.
- If you plan to use `mc admin update`, the MinIO process must have write access to the parent directory of the binary on the host system.
- `mc admin update` is not supported and should be avoided in Kubernetes/container environments. Upgrade containers by upgrading the relevant container images instead.
- **We do not recommend upgrading one MinIO server at a time. The product is designed to support parallel upgrades; please follow our recommended guidelines.**

Follow the MinIO Client [Quickstart Guide](https://docs.min.io/community/minio-object-store/reference/minio-mc.html#quickstart) for further instructions.

## Explore Further

- [MinIO Erasure Code Overview](https://min.io/docs/minio/linux/operations/concepts/erasure-coding.html)
- [Use `mc` with MinIO Server](https://min.io/docs/minio/linux/reference/minio-mc.html)
- [Use `minio-go` SDK with MinIO Server](https://min.io/docs/minio/linux/developers/go/minio-go.html)
- [The MinIO documentation website](https://min.io/docs/minio/linux/index.html)
- [The MinIO documentation website](https://docs.min.io/community/minio-object-store/index.html)
- [MinIO Erasure Code Overview](https://docs.min.io/community/minio-object-store/operations/concepts/erasure-coding.html)
- [Use `mc` with MinIO Server](https://docs.min.io/community/minio-object-store/reference/minio-mc.html)
- [Use `minio-go` SDK with MinIO Server](https://docs.min.io/enterprise/aistor-object-store/developers/sdk/go/)

## Contribute to MinIO Project

Please follow MinIO [Contributor's Guide](https://github.com/minio/minio/blob/master/CONTRIBUTING.md)

Please follow MinIO [Contributor's Guide](https://github.com/minio/minio/blob/master/CONTRIBUTING.md) for guidance on making new contributions to the repository.

## License

@@ -74,11 +74,11 @@ check_minimum_version() {

 assert_is_supported_arch() {
 	case "${ARCH}" in
-	x86_64 | amd64 | aarch64 | ppc64le | arm* | s390x | loong64 | loongarch64)
+	x86_64 | amd64 | aarch64 | ppc64le | arm* | s390x | loong64 | loongarch64 | riscv64)
 		return
 		;;
 	*)
-		echo "Arch '${ARCH}' is not supported. Supported Arch: [x86_64, amd64, aarch64, ppc64le, arm*, s390x, loong64, loongarch64]"
+		echo "Arch '${ARCH}' is not supported. Supported Arch: [x86_64, amd64, aarch64, ppc64le, arm*, s390x, loong64, loongarch64, riscv64]"
 		exit 1
 		;;
 	esac
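The `case` pattern in `assert_is_supported_arch` mixes exact names with one glob (`arm*`). The Go sketch below reproduces the updated allow-list, including `riscv64`. It uses `path.Match` so that `*` behaves like the shell glob for these slash-free names. `supportedArch` is a hypothetical name used for illustration only.

```go
package main

import "path"

// archPatterns mirrors the shell case-pattern list after the change.
var archPatterns = []string{
	"x86_64", "amd64", "aarch64", "ppc64le", "arm*",
	"s390x", "loong64", "loongarch64", "riscv64",
}

// supportedArch reports whether arch matches any pattern; "arm*" matches
// names such as "arm64" or "armv7l", as the shell case statement would.
func supportedArch(arch string) bool {
	for _, p := range archPatterns {
		if ok, _ := path.Match(p, arch); ok {
			return true
		}
	}
	return false
}
```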

@@ -9,7 +9,7 @@ function _init() {
 	export CGO_ENABLED=0

 	## List of architectures and OS to test cross compilation.
-	SUPPORTED_OSARCH="linux/ppc64le linux/mips64 linux/amd64 linux/arm64 linux/s390x darwin/arm64 darwin/amd64 freebsd/amd64 windows/amd64 linux/arm linux/386 netbsd/amd64 linux/mips openbsd/amd64"
+	SUPPORTED_OSARCH="linux/ppc64le linux/mips64 linux/amd64 linux/arm64 linux/s390x darwin/arm64 darwin/amd64 freebsd/amd64 windows/amd64 linux/arm linux/386 netbsd/amd64 linux/mips openbsd/amd64 linux/riscv64"
 }

 function _build() {

@@ -193,27 +193,27 @@ func (a adminAPIHandlers) SetConfigKVHandler(w http.ResponseWriter, r *http.Requ
 func setConfigKV(ctx context.Context, objectAPI ObjectLayer, kvBytes []byte) (result setConfigResult, err error) {
 	result.Cfg, err = readServerConfig(ctx, objectAPI, nil)
 	if err != nil {
-		return
+		return result, err
 	}

 	result.Dynamic, err = result.Cfg.ReadConfig(bytes.NewReader(kvBytes))
 	if err != nil {
-		return
+		return result, err
 	}

 	result.SubSys, _, _, err = config.GetSubSys(string(kvBytes))
 	if err != nil {
-		return
+		return result, err
 	}

 	tgts, err := config.ParseConfigTargetID(bytes.NewReader(kvBytes))
 	if err != nil {
-		return
+		return result, err
 	}
 	ctx = context.WithValue(ctx, config.ContextKeyForTargetFromConfig, tgts)
 	if verr := validateConfig(ctx, result.Cfg, result.SubSys); verr != nil {
 		err = badConfigErr{Err: verr}
-		return
+		return result, err
 	}

 	// Check if subnet proxy being set and if so set the same value to proxy of subnet

@@ -222,12 +222,12 @@ func setConfigKV(ctx context.Context, objectAPI ObjectLayer, kvBytes []byte) (re

 	// Update the actual server config on disk.
 	if err = saveServerConfig(ctx, objectAPI, result.Cfg); err != nil {
-		return
+		return result, err
 	}

 	// Write the config input KV to history.
 	err = saveServerConfigHistory(ctx, objectAPI, kvBytes)
-	return
+	return result, err
 }

 // GetConfigKVHandler - GET /minio/admin/v3/get-config-kv?key={key}
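The `return` to `return result, err` edits here (and the `return opts` / `return proxy` edits in later hunks) do not change behavior. With named results, a bare `return` returns the current values of those names. The explicit form only makes the returned values visible at each exit. The following is a minimal illustration with hypothetical `double*` functions:

```go
package main

import "strconv"

// doubleNaked uses bare returns: it returns whatever n and err currently hold.
func doubleNaked(s string) (n int, err error) {
	n, err = strconv.Atoi(s)
	if err != nil {
		return
	}
	n *= 2
	return
}

// doubleExplicit is equivalent but names the returned values at every exit.
func doubleExplicit(s string) (n int, err error) {
	n, err = strconv.Atoi(s)
	if err != nil {
		return n, err
	}
	n *= 2
	return n, err
}
```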

@@ -445,8 +445,10 @@ func (a adminAPIHandlers) ListAccessKeysLDAP(w http.ResponseWriter, r *http.Requ
 	for _, svc := range serviceAccounts {
 		expiryTime := svc.Expiration
 		serviceAccountList = append(serviceAccountList, madmin.ServiceAccountInfo{
-			AccessKey:  svc.AccessKey,
-			Expiration: &expiryTime,
+			AccessKey:   svc.AccessKey,
+			Expiration:  &expiryTime,
+			Name:        svc.Name,
+			Description: svc.Description,
 		})
 	}
 	for _, sts := range stsKeys {

@@ -625,8 +627,10 @@ func (a adminAPIHandlers) ListAccessKeysLDAPBulk(w http.ResponseWriter, r *http.
 		}
 		for _, svc := range serviceAccounts {
 			accessKeys.ServiceAccounts = append(accessKeys.ServiceAccounts, madmin.ServiceAccountInfo{
-				AccessKey:  svc.AccessKey,
-				Expiration: &svc.Expiration,
+				AccessKey:   svc.AccessKey,
+				Expiration:  &svc.Expiration,
+				Name:        svc.Name,
+				Description: svc.Description,
 			})
 		}
 		// if only service accounts, skip if user has no service accounts

@@ -173,6 +173,8 @@ func (a adminAPIHandlers) ListAccessKeysOpenIDBulk(w http.ResponseWriter, r *htt
 			if _, ok := accessKey.Claims[iamPolicyClaimNameOpenID()]; !ok {
 				continue // skip if no roleArn and no policy claim
 			}
+			// claim-based provider is in the roleArnMap under dummy ARN
+			arn = dummyRoleARN
 		}
 		matchingCfgName, ok := roleArnMap[arn]
 		if !ok {

@@ -61,7 +61,7 @@ func (a adminAPIHandlers) StartDecommission(w http.ResponseWriter, r *http.Reque
 		return
 	}

-	if z.IsRebalanceStarted() {
+	if z.IsRebalanceStarted(ctx) {
 		writeErrorResponseJSON(ctx, w, errorCodes.ToAPIErr(ErrAdminRebalanceAlreadyStarted), r.URL)
 		return
 	}

@@ -277,7 +277,7 @@ func (a adminAPIHandlers) RebalanceStart(w http.ResponseWriter, r *http.Request)
 		return
 	}

-	if pools.IsRebalanceStarted() {
+	if pools.IsRebalanceStarted(ctx) {
 		writeErrorResponseJSON(ctx, w, errorCodes.ToAPIErr(ErrAdminRebalanceAlreadyStarted), r.URL)
 		return
 	}

@@ -380,7 +380,7 @@ func (a adminAPIHandlers) RebalanceStop(w http.ResponseWriter, r *http.Request)
 func proxyDecommissionRequest(ctx context.Context, defaultEndPoint Endpoint, w http.ResponseWriter, r *http.Request) (proxy bool) {
 	host := env.Get("_MINIO_DECOM_ENDPOINT_HOST", defaultEndPoint.Host)
 	if host == "" {
-		return
+		return proxy
 	}
 	for nodeIdx, proxyEp := range globalProxyEndpoints {
 		if proxyEp.Host == host && !proxyEp.IsLocal {

@@ -389,5 +389,5 @@ func proxyDecommissionRequest(ctx context.Context, defaultEndPoint Endpoint, w h
 			}
 		}
 	}
-	return
+	return proxy
 }

@@ -70,7 +70,7 @@ func (a adminAPIHandlers) SiteReplicationAdd(w http.ResponseWriter, r *http.Requ

 func getSRAddOptions(r *http.Request) (opts madmin.SRAddOptions) {
 	opts.ReplicateILMExpiry = r.Form.Get("replicateILMExpiry") == "true"
-	return
+	return opts
 }

 // SRPeerJoin - PUT /minio/admin/v3/site-replication/join

@@ -304,7 +304,7 @@ func (a adminAPIHandlers) SRPeerGetIDPSettings(w http.ResponseWriter, r *http.Re
 	}
 }

-func parseJSONBody(ctx context.Context, body io.Reader, v interface{}, encryptionKey string) error {
+func parseJSONBody(ctx context.Context, body io.Reader, v any, encryptionKey string) error {
 	data, err := io.ReadAll(body)
 	if err != nil {
 		return SRError{

@@ -422,7 +422,7 @@ func (a adminAPIHandlers) SiteReplicationEdit(w http.ResponseWriter, r *http.Req
 func getSREditOptions(r *http.Request) (opts madmin.SREditOptions) {
 	opts.DisableILMExpiryReplication = r.Form.Get("disableILMExpiryReplication") == "true"
 	opts.EnableILMExpiryReplication = r.Form.Get("enableILMExpiryReplication") == "true"
-	return
+	return opts
 }

 // SRPeerEdit - PUT /minio/admin/v3/site-replication/peer/edit

@@ -484,7 +484,7 @@ func getSRStatusOptions(r *http.Request) (opts madmin.SRStatusOptions) {
 	opts.EntityValue = q.Get("entityvalue")
 	opts.ShowDeleted = q.Get("showDeleted") == "true"
 	opts.Metrics = q.Get("metrics") == "true"
-	return
+	return opts
 }

 // SiteReplicationRemove - PUT /minio/admin/v3/site-replication/remove

@@ -89,7 +89,7 @@ func (s *TestSuiteIAM) TestDeleteUserRace(c *check) {

 	// Create a policy
 	policy := "mypolicy"
-	policyBytes := []byte(fmt.Sprintf(`{
+	policyBytes := fmt.Appendf(nil, `{
   "Version": "2012-10-17",
   "Statement": [
     {

@@ -104,7 +104,7 @@ func (s *TestSuiteIAM) TestDeleteUserRace(c *check) {
       ]
     }
   ]
-}`, bucket))
+}`, bucket)
 	err = s.adm.AddCannedPolicy(ctx, policy, policyBytes)
 	if err != nil {
 		c.Fatalf("policy add error: %v", err)

@@ -113,7 +113,7 @@ func (s *TestSuiteIAM) TestDeleteUserRace(c *check) {
 	userCount := 50
 	accessKeys := make([]string, userCount)
 	secretKeys := make([]string, userCount)
-	for i := 0; i < userCount; i++ {
+	for i := range userCount {
 		accessKey, secretKey := mustGenerateCredentials(c)
 		err = s.adm.SetUser(ctx, accessKey, secretKey, madmin.AccountEnabled)
 		if err != nil {

@@ -133,7 +133,7 @@ func (s *TestSuiteIAM) TestDeleteUserRace(c *check) {
 	}

 	g := errgroup.Group{}
-	for i := 0; i < userCount; i++ {
+	for i := range userCount {
 		g.Go(func(i int) func() error {
 			return func() error {
 				uClient := s.getUserClient(c, accessKeys[i], secretKeys[i], "")

@@ -24,6 +24,7 @@ import (
 	"errors"
 	"fmt"
 	"io"
+	"maps"
 	"net/http"
 	"os"
 	"slices"

@@ -157,9 +158,7 @@ func (a adminAPIHandlers) ListUsers(w http.ResponseWriter, r *http.Request) {
 		writeErrorResponseJSON(ctx, w, toAdminAPIErr(ctx, err), r.URL)
 		return
 	}
-	for k, v := range ldapUsers {
-		allCredentials[k] = v
-	}
+	maps.Copy(allCredentials, ldapUsers)

 	// Marshal the response
 	data, err := json.Marshal(allCredentials)

@@ -1827,16 +1826,18 @@ func (a adminAPIHandlers) SetPolicyForUserOrGroup(w http.ResponseWriter, r *http
 			iamLogIf(ctx, err)
 		} else if foundGroupDN == nil || !underBaseDN {
 			err = errNoSuchGroup
+		} else {
+			entityName = foundGroupDN.NormDN
 		}
-		entityName = foundGroupDN.NormDN
 	} else {
 		var foundUserDN *xldap.DNSearchResult
 		if foundUserDN, err = globalIAMSys.LDAPConfig.GetValidatedDNForUsername(entityName); err != nil {
 			iamLogIf(ctx, err)
 		} else if foundUserDN == nil {
 			err = errNoSuchUser
+		} else {
+			entityName = foundUserDN.NormDN
 		}
-		entityName = foundUserDN.NormDN
 	}
 	if err != nil {
 		writeErrorResponseJSON(ctx, w, toAdminAPIErr(ctx, err), r.URL)

@@ -2947,7 +2948,7 @@ func commonAddServiceAccount(r *http.Request, ldap bool) (context.Context, auth.
 		name:        createReq.Name,
 		description: description,
 		expiration:  createReq.Expiration,
-		claims:      make(map[string]interface{}),
+		claims:      make(map[string]any),
 	}

 	condValues := getConditionValues(r, "", cred)

@@ -208,6 +208,8 @@ func TestIAMInternalIDPServerSuite(t *testing.T) {
 			suite.TestGroupAddRemove(c)
 			suite.TestServiceAccountOpsByAdmin(c)
 			suite.TestServiceAccountPrivilegeEscalationBug(c)
+			suite.TestServiceAccountPrivilegeEscalationBug2_2025_10_15(c, true)
+			suite.TestServiceAccountPrivilegeEscalationBug2_2025_10_15(c, false)
 			suite.TestServiceAccountOpsByUser(c)
 			suite.TestServiceAccountDurationSecondsCondition(c)
 			suite.TestAddServiceAccountPerms(c)

@@ -332,7 +334,7 @@ func (s *TestSuiteIAM) TestUserPolicyEscalationBug(c *check) {

 	// 2.2 create and associate policy to user
 	policy := "mypolicy-test-user-update"
-	policyBytes := []byte(fmt.Sprintf(`{
+	policyBytes := fmt.Appendf(nil, `{
   "Version": "2012-10-17",
   "Statement": [
     {

@@ -355,7 +357,7 @@ func (s *TestSuiteIAM) TestUserPolicyEscalationBug(c *check) {
       ]
     }
   ]
-}`, bucket, bucket))
+}`, bucket, bucket)
 	err = s.adm.AddCannedPolicy(ctx, policy, policyBytes)
 	if err != nil {
 		c.Fatalf("policy add error: %v", err)

@@ -562,7 +564,7 @@ func (s *TestSuiteIAM) TestPolicyCreate(c *check) {

 	// 1. Create a policy
 	policy := "mypolicy"
-	policyBytes := []byte(fmt.Sprintf(`{
+	policyBytes := fmt.Appendf(nil, `{
   "Version": "2012-10-17",
   "Statement": [
     {

@@ -585,7 +587,7 @@ func (s *TestSuiteIAM) TestPolicyCreate(c *check) {
       ]
     }
   ]
-}`, bucket, bucket))
+}`, bucket, bucket)
 	err = s.adm.AddCannedPolicy(ctx, policy, policyBytes)
 	if err != nil {
 		c.Fatalf("policy add error: %v", err)

@@ -680,7 +682,7 @@ func (s *TestSuiteIAM) TestCannedPolicies(c *check) {
 		c.Fatalf("bucket create error: %v", err)
 	}

-	policyBytes := []byte(fmt.Sprintf(`{
+	policyBytes := fmt.Appendf(nil, `{
   "Version": "2012-10-17",
   "Statement": [
     {

@@ -703,7 +705,7 @@ func (s *TestSuiteIAM) TestCannedPolicies(c *check) {
       ]
     }
   ]
-}`, bucket, bucket))
+}`, bucket, bucket)

 	// Check that default policies can be overwritten.
 	err = s.adm.AddCannedPolicy(ctx, "readwrite", policyBytes)

@@ -739,7 +741,7 @@ func (s *TestSuiteIAM) TestGroupAddRemove(c *check) {
 	}

 	policy := "mypolicy"
-	policyBytes := []byte(fmt.Sprintf(`{
+	policyBytes := fmt.Appendf(nil, `{
   "Version": "2012-10-17",
   "Statement": [
     {

@@ -762,7 +764,7 @@ func (s *TestSuiteIAM) TestGroupAddRemove(c *check) {
       ]
     }
   ]
-}`, bucket, bucket))
+}`, bucket, bucket)
 	err = s.adm.AddCannedPolicy(ctx, policy, policyBytes)
 	if err != nil {
 		c.Fatalf("policy add error: %v", err)

@@ -911,7 +913,7 @@ func (s *TestSuiteIAM) TestServiceAccountOpsByUser(c *check) {

 	// Create policy, user and associate policy
 	policy := "mypolicy"
-	policyBytes := []byte(fmt.Sprintf(`{
+	policyBytes := fmt.Appendf(nil, `{
   "Version": "2012-10-17",
   "Statement": [
     {

@@ -934,7 +936,7 @@ func (s *TestSuiteIAM) TestServiceAccountOpsByUser(c *check) {
       ]
     }
   ]
-}`, bucket, bucket))
+}`, bucket, bucket)
 	err = s.adm.AddCannedPolicy(ctx, policy, policyBytes)
 	if err != nil {
 		c.Fatalf("policy add error: %v", err)

@@ -995,7 +997,7 @@ func (s *TestSuiteIAM) TestServiceAccountDurationSecondsCondition(c *check) {

 	// Create policy, user and associate policy
 	policy := "mypolicy"
-	policyBytes := []byte(fmt.Sprintf(`{
+	policyBytes := fmt.Appendf(nil, `{
   "Version": "2012-10-17",
   "Statement": [
     {

@@ -1026,7 +1028,7 @@ func (s *TestSuiteIAM) TestServiceAccountDurationSecondsCondition(c *check) {
       ]
     }
   ]
-}`, bucket, bucket))
+}`, bucket, bucket)
 	err = s.adm.AddCannedPolicy(ctx, policy, policyBytes)
 	if err != nil {
 		c.Fatalf("policy add error: %v", err)

@@ -1093,7 +1095,7 @@ func (s *TestSuiteIAM) TestServiceAccountOpsByAdmin(c *check) {

 	// Create policy, user and associate policy
 	policy := "mypolicy"
-	policyBytes := []byte(fmt.Sprintf(`{
+	policyBytes := fmt.Appendf(nil, `{
   "Version": "2012-10-17",
   "Statement": [
     {

@@ -1116,7 +1118,7 @@ func (s *TestSuiteIAM) TestServiceAccountOpsByAdmin(c *check) {
       ]
     }
   ]
-}`, bucket, bucket))
+}`, bucket, bucket)
 	err = s.adm.AddCannedPolicy(ctx, policy, policyBytes)
 	if err != nil {
 		c.Fatalf("policy add error: %v", err)

@@ -1249,6 +1251,108 @@ func (s *TestSuiteIAM) TestServiceAccountPrivilegeEscalationBug(c *check) {
 	}
 }

+func (s *TestSuiteIAM) TestServiceAccountPrivilegeEscalationBug2_2025_10_15(c *check, forRoot bool) {
+	ctx, cancel := context.WithTimeout(context.Background(), testDefaultTimeout)
+	defer cancel()
+
+	for i := range 3 {
+		err := s.client.MakeBucket(ctx, fmt.Sprintf("bucket%d", i+1), minio.MakeBucketOptions{})
+		if err != nil {
+			c.Fatalf("bucket create error: %v", err)
+		}
+		defer func(i int) {
+			_ = s.client.RemoveBucket(ctx, fmt.Sprintf("bucket%d", i+1))
+		}(i)
+	}
+
+	allow2BucketsPolicyBytes := []byte(`{
+  "Version": "2012-10-17",
+  "Statement": [
+    {
+      "Sid": "ListBucket1AndBucket2",
+      "Effect": "Allow",
+      "Action": ["s3:ListBucket"],
+      "Resource": ["arn:aws:s3:::bucket1", "arn:aws:s3:::bucket2"]
+    },
+    {
+      "Sid": "ReadWriteBucket1AndBucket2Objects",
+      "Effect": "Allow",
+      "Action": [
+        "s3:DeleteObject",
+        "s3:DeleteObjectVersion",
+        "s3:GetObject",
+        "s3:GetObjectVersion",
+        "s3:PutObject"
+      ],
+      "Resource": ["arn:aws:s3:::bucket1/*", "arn:aws:s3:::bucket2/*"]
+    }
+  ]
+}`)
+
+	if forRoot {
+		// Create a service account for the root user.
+		_, err := s.adm.AddServiceAccount(ctx, madmin.AddServiceAccountReq{
+			Policy:    allow2BucketsPolicyBytes,
+			AccessKey: "restricted",
+			SecretKey: "restricted123",
+		})
+		if err != nil {
+			c.Fatalf("could not create service account")
+		}
+		defer func() {
+			_ = s.adm.DeleteServiceAccount(ctx, "restricted")
+		}()
+	} else {
+		// Create a regular user and attach consoleAdmin policy
+		err := s.adm.AddUser(ctx, "foobar", "foobar123")
+		if err != nil {
+			c.Fatalf("could not create user")
+		}
+
+		_, err = s.adm.AttachPolicy(ctx, madmin.PolicyAssociationReq{
+			Policies: []string{"consoleAdmin"},
+			User:     "foobar",
+		})
+		if err != nil {
+			c.Fatalf("could not attach policy")
+		}
+
+		// Create a service account for the regular user.
+		_, err = s.adm.AddServiceAccount(ctx, madmin.AddServiceAccountReq{
+			Policy:     allow2BucketsPolicyBytes,
+			TargetUser: "foobar",
+			AccessKey:  "restricted",
+			SecretKey:  "restricted123",
+		})